OpenAI, the company co-founded by Elon Musk, has just discovered that its artificial neural network CLIP shows behaviour strikingly similar to that of the human brain. This finding has scientists hopeful about the ability of future AI networks to identify images in a symbolic, conceptual and literal capacity.

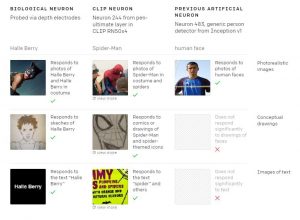

The best-known biological example is the “Halle Berry” neuron, which responds to photographs and sketches of the actress and connects those images with the name “Halle Berry.”

Now, OpenAI’s multimodal vision system shows comparable traits, such as the “Spider-Man” neuron, an artificial neuron that responds not only to an image of the text “spider” but also to the comic book character in both illustrated and live-action form.

This ability to recognize a single concept represented in various contexts demonstrates CLIP’s abstraction capabilities. The capacity for abstraction allows a vision system to tie a series of images and text to a central theme. However, a difference between biological and artificial neurons lies in semantics versus visual stimuli. Whereas neurons in the brain connect a cluster of visual input to a single concept, AI neurons respond to a cluster of ideas.

Photo Credits: OpenAI

The researchers examined CLIP along two lines: 1) feature visualization, which synthesizes an input that maximizes a neuron’s activation, and 2) dataset examples, which looks at the real inputs that activate a neuron most strongly. They found that CLIP neurons seem to be immensely multi-faceted, meaning that they respond to many distinct concepts at a high level of abstraction.
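To make the “dataset examples” idea concrete, here is a minimal sketch in Python. It is not OpenAI’s code: the function `top_activating_examples`, the stand-in `toy_neuron` and every activation value are made up for illustration. It only shows the general principle of ranking inputs by how strongly a single neuron responds to them.

```python
from typing import Callable, Iterable, List, Tuple


def top_activating_examples(
    examples: Iterable[str],
    neuron: Callable[[str], float],
    k: int = 3,
) -> List[Tuple[str, float]]:
    """Return the k examples that produce the highest activation for one neuron."""
    scored = [(example, neuron(example)) for example in examples]
    # Sort from strongest to weakest response.
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical activations: these numbers are invented for illustration and
# are NOT measurements from CLIP. Each key is a text label standing in for
# an input image.
TOY_ACTIVATIONS = {
    "photo of a spider": 0.71,
    "the word 'spider' as text": 0.64,
    "Spider-Man comic panel": 0.93,
    "Spider-Man film still": 0.88,
    "photo of a teapot": 0.02,
}


def toy_neuron(example: str) -> float:
    """Stand-in for a real neuron: looks up a made-up activation value."""
    return TOY_ACTIVATIONS.get(example, 0.0)


top = top_activating_examples(TOY_ACTIVATIONS, toy_neuron, k=3)
for label, activation in top:
    print(f"{activation:.2f}  {label}")
```

In a real analysis the stand-in neuron would be replaced by a unit inside the network and the labels by actual images. A neuron whose strongest examples span photographs, drawings and text of the same subject is what the article calls multi-faceted.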

As a recognition system, CLIP also exhibits various forms of bias. For instance, the system’s “Middle East” neuron has been associated with terrorism, alongside an “immigration” neuron that responds to input involving Latin America.

As for the limits of these findings, the scientists acknowledge that, despite CLIP’s ability to recognize geographical regions, individual cities and landmarks, the system does not appear to have a “San Francisco” neuron that ties a landmark such as Twin Peaks to the identifier San Francisco.