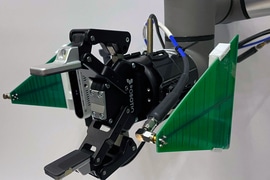

This robotic arm fuses data from a camera and antenna to locate and retrieve items, even if they are buried under a pile.

RFusion could have many broader applications in the future, such as sorting through piles to fulfill orders in a warehouse, identifying and installing components in an auto manufacturing plant, or helping elderly people perform daily tasks at home, though the current prototype isn’t yet fast enough for these uses. According to senior author Fadel Adib, associate professor in the Department of Electrical Engineering and Computer Science and director of the Signal Kinetics group in the MIT Media Lab:

“This idea of being able to find items in a chaotic world is an open problem that we’ve been working on for a few years. Having robots that are able to search for things under a pile is a growing need in the industry today. Right now, you can think of this as a Roomba on steroids, but in the near term, this could have a lot of applications in manufacturing and warehouse environments.”

Co-authors include research assistant Tara Boroushaki, the lead author; electrical engineering and computer science graduate student Isaac Perper; research associate Mergen Nachin; and Alberto Rodriguez, the Class of 1957 Associate Professor in the Department of Mechanical Engineering. The research will be presented at the Association for Computing Machinery Conference on Embedded Networked Sensor Systems next month.

Sending signals

RFusion begins searching for an object using its antenna, which bounces signals off the RFID tag to identify a spherical area in which the tag is located. It combines that sphere with the camera input, which narrows down the object’s location. For instance, the item can’t be located on an area of a table that is empty.
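The narrowing step described above can be illustrated with a minimal sketch. It assumes the antenna yields a single range estimate to the tag and the camera yields 3D points on occupied surfaces (piles and objects, not empty table). The function name, tolerance, and coordinates are hypothetical and are not taken from the RFusion implementation.

```python
import math

def candidate_points(antenna_pos, tag_range, surface_points, tolerance=0.05):
    """Keep camera-observed points that lie near the sphere of radius
    tag_range centered on the antenna (all values in meters, assumed)."""
    kept = []
    for point in surface_points:
        distance = math.dist(antenna_pos, point)
        # A point is a plausible tag location only if it sits close to
        # the spherical shell implied by the RF range estimate.
        if abs(distance - tag_range) <= tolerance:
            kept.append(point)
    return kept

# Hypothetical scene: one antenna position and three occupied surface points.
antenna = (0.0, 0.0, 1.0)
points = [(0.6, 0.0, 0.2), (0.1, 0.1, 0.9), (1.5, 1.5, 0.0)]
print(candidate_points(antenna, 1.0, points))
```

Only the first point survives, because it is the only one roughly one meter from the antenna. Regions the camera sees as empty never enter `surface_points`, which mirrors the observation that the item cannot be on an empty part of the table.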

But once the robot has a general idea of where the item is, it would need to swing its arm widely around the room taking additional measurements to come up with the exact location, which is slow and inefficient.

The researchers used reinforcement learning to train a neural network that can optimize the robot’s trajectory to the object. In reinforcement learning, the algorithm is trained through trial and error with a reward system. Boroushaki explains:

“This is also how our brain learns. We get rewarded from our teachers, from our parents, from a computer game, etc. The same thing happens in reinforcement learning. We let the agent make mistakes or do something right and then we punish or reward the network. This is how the network learns something that is really hard for it to model.”

In the case of RFusion, the optimization algorithm was rewarded when it limited the number of moves it had to make to localize the item and the distance it had to travel to pick it up.
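One way to picture such a reward is the sketch below. It is an illustration only: the penalty weights, the success bonus, and the function names are assumptions, and the article does not give the actual reward used to train RFusion.

```python
import math

def step_reward(prev_pos, new_pos, localized,
                move_penalty=1.0, distance_weight=0.5, success_bonus=10.0):
    """Reward for one arm move: every move costs a fixed penalty plus a
    cost proportional to distance traveled; localizing the item earns a bonus.
    All weights are hypothetical."""
    travel = math.dist(prev_pos, new_pos)
    reward = -move_penalty - distance_weight * travel
    if localized:
        reward += success_bonus
    return reward

def episode_return(path):
    """Sum step rewards along a path of arm positions, assuming the item
    is localized on the final move."""
    total = 0.0
    last_step = len(path) - 2
    for i in range(len(path) - 1):
        total += step_reward(path[i], path[i + 1], localized=(i == last_step))
    return total

short_path = [(0, 0, 0), (0, 0, 1)]
long_path = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 0, 1)]
print(episode_return(short_path), episode_return(long_path))
```

Under these assumed weights, the shorter path earns the higher return, so an agent trained on this signal is pushed toward fewer moves and less travel.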

Once the system identifies the exact right spot, the neural network uses combined RF and visual information to predict how the robotic arm should grasp the object, including the angle of the hand and the width of the gripper, and whether it must remove other items first. It also scans the item’s tag one last time to make sure it picked up the right object.

Cutting through clutter

The researchers tested RFusion in several different environments. They buried a keychain in a box full of clutter and hid a remote control under a pile of items on a couch.

Feeding all the camera data and RF measurements to the reinforcement learning algorithm would have overwhelmed the system. So, drawing on the method a GPS uses to consolidate data from satellites, the researchers summarized the RF measurements and limited the visual data to the area right in front of the robot. Their approach worked well: RFusion had a 96% success rate when retrieving objects that were fully hidden under a pile. Boroushaki noted:

“Sometimes, if you only rely on RF measurements, there is going to be an outlier, and if you rely only on vision, there is sometimes going to be a mistake from the camera. But if you combine them, they are going to correct each other. That is what made the system so robust.”
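The GPS analogy mentioned above can be made concrete with a small trilateration sketch, in which several range measurements taken from known positions are consolidated into a single location estimate. This is a generic least-squares technique shown in 2D for brevity, not the researchers' actual summarization method. The antenna positions, ranges, and function name are hypothetical.

```python
import math

def trilaterate_2d(anchors, ranges):
    """Estimate (x, y) from three or more known positions and measured ranges.

    Subtracting the first range equation from the others gives linear
    equations in x and y, solved here by least squares (normal equations)."""
    (x0, y0), r0 = anchors[0], ranges[0]
    s_aa = s_ab = s_bb = s_ac = s_bc = 0.0
    for (xi, yi), ri in zip(anchors[1:], ranges[1:]):
        a = 2.0 * (xi - x0)
        b = 2.0 * (yi - y0)
        c = r0**2 - ri**2 + xi**2 + yi**2 - x0**2 - y0**2
        s_aa += a * a
        s_ab += a * b
        s_bb += b * b
        s_ac += a * c
        s_bc += b * c
    det = s_aa * s_bb - s_ab * s_ab
    if abs(det) < 1e-12:
        raise ValueError("measurement positions are collinear or too few")
    # Cramer's rule on the 2x2 normal equations.
    x = (s_ac * s_bb - s_ab * s_bc) / det
    y = (s_aa * s_bc - s_ab * s_ac) / det
    return x, y

# Hypothetical antenna positions and noise-free ranges to a tag at (0.3, 0.4).
anchors = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
tag = (0.3, 0.4)
ranges = [math.dist(a, tag) for a in anchors]
estimate = trilaterate_2d(anchors, ranges)
print(estimate)
```

The point of the sketch is that many raw measurements collapse into one compact estimate, which is far easier for a learning algorithm to consume than the full measurement stream.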

In the future, the researchers hope to increase the speed of the system so it can move smoothly, rather than stopping periodically to take measurements. This would enable RFusion to be deployed in a fast-paced manufacturing or warehouse setting. Beyond its potential industrial uses, a system like this could even be incorporated into future smart homes to assist people with any number of household tasks, Boroushaki says:

“Every year, billions of RFID tags are used to identify objects in today’s complex supply chains, including clothing and lots of other consumer goods. The RFusion approach points the way to autonomous robots that can dig through a pile of mixed items and sort them out using the data stored in the RFID tags, much more efficiently than having to inspect each item individually, especially when the items look similar to a computer vision system. The RFusion approach is a great step forward for robotics operating in complex supply chains where identifying and ‘picking’ the right item quickly and accurately is the key to getting orders fulfilled on time and keeping demanding customers happy.”

The research is sponsored by the National Science Foundation, a Sloan Research Fellowship, NTT DATA, Toppan, Toppan Forms, and the Abdul Latif Jameel Water and Food Systems Lab.