On February 14, a researcher who was frustrated with reproducing the results of a machine learning research paper opened up a Reddit account under the username ContributionSecure14 and posted:

“I just spent a week implementing a paper as a baseline and failed to reproduce the results. I realized today after googling for a bit that a few others were also unable to reproduce the results. Is there a list of such papers? It will save people a lot of time and effort.”

The post struck a nerve with other users on r/MachineLearning, which is the largest Reddit community for machine learning. A few users also posted links to machine learning papers they had failed to implement and voiced their frustration with code implementation not being a requirement in ML conferences.

The next day, ContributionSecure14 created “Papers Without Code,” a website that aims to create a centralized list of machine learning papers that are not implementable. ContributionSecure14 wrote on r/MachineLearning:

“I’m not sure if this is the best or worst idea ever but I figured it would be useful to collect a list of papers which people have tried to reproduce and failed. This will give the authors a chance to either release their code, provide pointers or rescind the paper. My hope is that this incentivizes a healthier ML research culture around not publishing unreproducible work.”

Machine learning researchers regularly publish papers that describe concepts and techniques that highlight new challenges in machine learning systems or introduce new ways to solve known problems.

Having source code to go along with a research paper helps a lot in verifying the validity of a machine learning technique and building on top of it. However, this is not a requirement for machine learning conferences. As a matter of fact, many students and researchers struggle to reproduce the results reported in these papers.

“Unreproducible work wastes the time and effort of well-meaning researchers, and authors should strive to ensure at least one public implementation of their work exists. Publishing a paper with empirical results in the public domain is pointless if others cannot build off of the paper or use it as a baseline.”

ContributionSecure14 acknowledges that there are sometimes legitimate reasons for machine learning researchers not to release their code.

“If the authors publish a paper without code due to such circumstances, I personally believe that they have the academic responsibility to work closely with other researchers trying to reproduce their paper. There is no point in publishing the paper in the public domain if others cannot build off of it. There should be at least one publicly available reference implementation for others to build off of or use as a baseline.”

In some cases, even if the authors release both the source code and data to their paper, other machine learning researchers still struggle to reproduce the results. This can be due to various reasons. ContributionSecure14 noted:

“I think it is necessary to have reproducible code as a prerequisite in order to independently verify the validity of the results claimed in the paper, but [code alone] is not sufficient.”

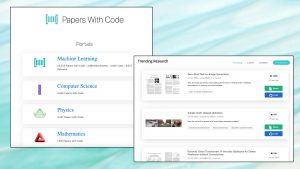

Papers With Code provides a repository of code implementations for scientific papers. Photo credits: TechTalks

The reproducibility problem is not limited to small machine learning research teams. Even big tech companies that spend millions of dollars on AI research every year often fail to validate the results of their papers.

In recent years, we have seen a growing focus on AI’s reproducibility crisis. Notable work in this regard includes the efforts of Joelle Pineau, a machine learning scientist at Montreal’s McGill University and Facebook AI, at conferences such as NeurIPS.

“Better reproducibility means it’s much easier to build on a paper. Often, the review process is short and limited, and the true impact of a paper is something we see much later. The paper lives on, and as a community, we have a chance to build on the work, examine the code, and have a critical eye to what are the contributions.”

At NeurIPS, Pineau has helped develop standards and processes that can help researchers and reviewers evaluate the reproducibility of machine learning papers. Her efforts have resulted in an increase in code and data submission at NeurIPS.

Papers With Code is another interesting project. This website provides implementations for scientific research papers published and presented at different venues. ContributionSecure14 said:

“PapersWithCode plays an important role in highlighting papers that are reproducible. However, it does not address the problem of unreproducible papers. If they fail to implement it successfully, they might reach out to the authors (who may not respond) or simply give up. This can happen to multiple researchers who are not aware of prior or ongoing attempts to reproduce the paper, resulting in many weeks of productivity wasted collectively.”

Papers Without Code tracks machine learning papers that have unreproducible results. Photo credits: TechTalks

Papers Without Code has a submission page, where researchers can submit unreproducible machine learning papers along with the details of their efforts, such as how much time they spent trying to reproduce the results. If a submission is valid, Papers Without Code will contact the paper’s original authors and request clarification or publication of implementation details. If the authors do not reply in a timely fashion, the paper will be added to the list of unreproducible machine learning papers. Papers Without Code can become a hub for creating a dialogue between the original authors of machine learning papers and researchers who are trying to reproduce their work.

For example, if you’re working on research that builds on the work done in another paper, you should try out the code or the machine learning model yourself. Another good resource is Professor Pineau’s “Machine Learning Reproducibility Checklist.” The checklist provides clear guidelines on how to make the description, code, and data of a machine learning paper clear and reproducible for other researchers.

ContributionSecure14 believes that machine learning researchers can play a crucial role in promoting a culture of reproducibility.